Thread Management

Download MultiThreading in .NET 2.0

Why Threads?

Some years ago I saw a letter to the editor about the need for a multitasking system. The writer said, “I don’t care about multitasking because I can only do one thing at a time.” Really? Does this person only do one thing at a time? The letter continues: “I finish my Word document, print it, fire up my modem to connect to the Internet, read my e-mail, and go back to work on another document.” Does this person use his time efficiently? Many of us might suggest that he fire up his modem while the printer is printing, or work on another document while the previous one is being printed. Good suggestions, indeed. In fact, we multitask constantly in our daily life. For example, you might watch your favorite TV program or movie while enjoying your popcorn. Or, while you are printing a long document, you might read a newspaper or company news. There are many such examples of doing two or more tasks simultaneously. This is a form of multitasking!

Multitasking is even more common in industry. While each worker on an assembly line seems to work sequentially, there can be multiple production lines, all of which perform the same task concurrently. Moreover, the engine assembly lines produce engines while other lines produce other components, and the car assembly lines run concurrently with all of the other lines. The final product is the result of these concurrently running assembly and production lines. Without this type of “parallelism,” Detroit would not have been able to produce enough airplanes and tanks for World War II, or enough automobiles to meet our demand.

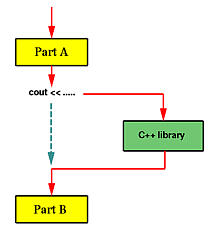

Unfortunately, until you learn how to split a program into multiple execution threads, every program you write contains a single execution thread. The following diagram shows an example. Suppose we have a program of two parts, Part A and Part B. After Part A finishes its computation, we use some cout statements to print a large amount of output. As we all know, when a program prints, control is transferred to a function in C++’s library and the execution of the program is essentially suspended, shown as a dashed line in the diagram, until the printing completes. Once the printing is done, the execution of the program resumes and starts the computation of Part B. Is there anything wrong with this? No, we are used to it, and we have been trained to program this way ever since CS101. However, is this way of programming good enough in terms of efficiency? It depends, but in many situations it is not good enough.

If Part B must use some data generated by Part A, then Part B perhaps has to be executed after the output of cout completes. On the other hand, in many situations Part A and Part B are independent of each other, or one may slightly rewrite both parts so that they do not depend on each other. In this case, Part B does not have to wait until cout completes. This is the key point! Before the execution of Part A, the program can be split into two execution threads, one for Part A and the other for Part B. See the diagram below. In this way, both execution threads share the CPU and all resources allocated to the program. Moreover, while Part A is performing output, which causes Part A to wait, Part B can take the CPU and execute. As a result, this version is more efficient than the previous one. Moreover, in a system with more than one CPU, it is possible that the system will run both Part A and Part B at the same time, one on each CPU.
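As a rough illustration of this split, the following Java sketch runs two independent parts on separate threads. The class name TwoPartsSketch and the methods partA and partB are hypothetical placeholders, and Java is used only for illustration (the discussion above refers to C++'s cout). The join calls at the end make the calling thread wait for both parts; thread join is described later on this page.

```java
final class TwoPartsSketch {
    // Part A (placeholder): computes a sum, then prints several lines of output.
    static long partA() {
        long sum = 0;
        for (int i = 1; i <= 1000; i++) {
            sum += i;
        }
        for (int line = 0; line < 3; line++) {
            System.out.println("Part A output line " + line);
        }
        return sum;
    }

    // Part B (placeholder): a computation that needs nothing from Part A.
    static long partB() {
        long product = 1;
        for (int i = 1; i <= 10; i++) {
            product *= i;
        }
        return product;
    }

    // Runs both parts on their own threads and returns {resultA, resultB}.
    static long[] runBoth() {
        long[] results = new long[2];
        Thread a = new Thread(() -> results[0] = partA());
        Thread b = new Thread(() -> results[1] = partB());
        a.start();
        b.start();
        try {
            a.join(); // wait for Part A
            b.join(); // wait for Part B
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        }
        return results;
    }

    public static void main(String[] args) {
        long[] results = runBoth();
        System.out.println("Part A: " + results[0] + ", Part B: " + results[1]);
    }
}
```

While Part A is blocked in its output calls, the scheduler is free to run Part B; on a machine with several CPUs the two may also run at the same time.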

In real programming practice, a program may use an execution thread for handling keyboard/mouse input, a second execution thread for handling screen updates, and a number of other threads for carrying out various computation tasks.

Example: Quicksort

The quicksort algorithm consists of two steps in each recursion. First, the partition step divides the input array segment into two segments such that all elements in the left segment are smaller than or equal to all elements in the right segment. Second, the sorting step sorts the left segment and the right segment. After these two steps complete, the input segment is sorted. While it is not obvious whether the partition step can use multiple execution threads, one can split the execution of the sorting step into two threads, one sorting the left segment and the other sorting the right one. This is shown in the diagram below:
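One possible way to express this split in Java is sketched below. It is only an illustration under a few assumptions: the class name ThreadedQuicksortSketch, the CUTOFF value, and the choice of the Lomuto partition scheme are not from the text above. After partitioning, the current thread creates one child thread for the left segment, sorts the right segment itself, and then waits for the child.

```java
import java.util.Arrays;

final class ThreadedQuicksortSketch {
    // Assumed tuning value: shorter segments are sorted without creating a thread.
    static final int CUTOFF = 1000;

    // Sorts a[lo..hi] (both bounds inclusive).
    static void sort(int[] a, int lo, int hi) {
        if (lo >= hi) {
            return;
        }
        int p = partition(a, lo, hi);
        if (hi - lo < CUTOFF) {
            sort(a, lo, p - 1);
            sort(a, p + 1, hi);
            return;
        }
        Thread child = new Thread(() -> sort(a, lo, p - 1)); // child sorts the left segment
        child.start();
        sort(a, p + 1, hi);                                   // this thread sorts the right segment
        try {
            child.join();                                     // wait until the left segment is sorted
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while sorting", e);
        }
    }

    // Lomuto partition: returns the pivot's final index. Elements smaller than or
    // equal to the pivot end up on its left, larger elements on its right.
    static int partition(int[] a, int lo, int hi) {
        int pivot = a[hi];
        int i = lo;
        for (int j = lo; j < hi; j++) {
            if (a[j] <= pivot) {
                swap(a, i, j);
                i++;
            }
        }
        swap(a, i, hi);
        return i;
    }

    static void swap(int[] a, int i, int j) {
        int tmp = a[i];
        a[i] = a[j];
        a[j] = tmp;
    }

    // Convenience wrapper: returns a sorted copy and leaves the input untouched.
    static int[] sortedCopy(int[] input) {
        int[] copy = Arrays.copyOf(input, input.length);
        sort(copy, 0, copy.length - 1);
        return copy;
    }

    public static void main(String[] args) {
        System.out.println(Arrays.toString(sortedCopy(new int[] {5, 2, 9, 1, 5, 6})));
    }
}
```

The two segments do not overlap, so the two threads never touch the same array element and no locking is needed. The cutoff keeps the number of threads small; creating a thread for every tiny segment would likely cost more than it saves.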

Example: Merging

Consider another simple problem. Suppose we have two sorted arrays a[ ] and b[ ] of n elements each, indexed from 1 to n. For simplicity, we assume that all of these 2n elements are different. Our job is to merge these two arrays into a single sorted array. Everyone who has taken a data structures course knows how to do it; however, let us look at the same problem from a different angle.

Take an element from array a, say a[i]. Because a is sorted, we know that a[i] is larger than i-1 elements of a. If we can figure out how many elements of b are smaller than a[i], we will know the exact location of a[i] in the sorted array. This is illustrated in the following diagram:

With a slightly modified binary search, we can easily determine the location of a[i] in array b. There are only three possibilities:

- a[i] is less than b[1], the smallest element of b: In this case, a[i] is larger than i-1 elements in a and smaller than all elements in b. Therefore, a[i] should be in position i of the sorted array.

- a[i] is larger than b[n], the largest element of b: In this case, a[i] is larger than i-1 elements in a and n elements in b. Therefore, a[i] should be in position i+n of the sorted array.

- a[i] is between b[k-1] and b[k]: In this case, a[i] is larger than i-1 elements in a and k-1 elements in b. Therefore, a[i] should be in position i+k-1 of the sorted array.

After the main program reads in both arrays, it can split itself into 2n execution threads, each of which handles an element in a or in b. Each of these execution threads determines the position of its element in the merged array and writes the value into the corresponding location. After this, we will have a merged array! Thus, we use 2n threads, each of which takes O(log2(n)) comparisons to get the job done. If enough CPUs are available to run these threads at the same time, the elapsed time is O(log2(n)), even though the total number of comparisons is larger. In the conventional serial case, we use one execution thread which uses O(n) comparisons to merge the arrays.
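The scheme above can be sketched in Java as follows. The names ParallelMergeSketch and countSmaller are hypothetical, and Java arrays are indexed from 0, so an element at index i has exactly i smaller elements in its own array and its final index is i plus the number of smaller elements in the other array. Both inputs are assumed to be sorted with all elements distinct, as in the text.

```java
import java.util.ArrayList;
import java.util.List;

final class ParallelMergeSketch {
    // Binary search: number of elements in the sorted array arr that are smaller than key.
    static int countSmaller(int[] arr, int key) {
        int lo = 0;
        int hi = arr.length;
        while (lo < hi) {
            int mid = (lo + hi) >>> 1;
            if (arr[mid] < key) {
                lo = mid + 1;
            } else {
                hi = mid;
            }
        }
        return lo;
    }

    // Merges two sorted arrays of distinct values using one thread per element.
    static int[] merge(int[] a, int[] b) {
        int[] out = new int[a.length + b.length];
        List<Thread> threads = new ArrayList<>();
        for (int i = 0; i < a.length; i++) {
            final int idx = i;
            threads.add(new Thread(() -> out[idx + countSmaller(b, a[idx])] = a[idx]));
        }
        for (int j = 0; j < b.length; j++) {
            final int idx = j;
            threads.add(new Thread(() -> out[idx + countSmaller(a, b[idx])] = b[idx]));
        }
        for (Thread t : threads) {
            t.start();
        }
        for (Thread t : threads) {
            try {
                t.join(); // wait until every element has been placed
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException("interrupted while merging", e);
            }
        }
        return out;
    }

    public static void main(String[] args) {
        int[] merged = merge(new int[] {1, 4, 6, 9}, new int[] {2, 3, 7, 10});
        System.out.println(java.util.Arrays.toString(merged));
    }
}
```

Because the elements are distinct, every thread writes to a different slot of the output array, so the threads do not need to coordinate with one another. One thread per element is practical only for small inputs; it is used here to mirror the description, not as a recommendation.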

Example: Matrix Multiplication

Another interesting application is the multiplication of two matrices. Suppose we have two matrices, A with m rows and k columns and B with k rows and n columns, and want to compute the product of A and B as a matrix C of m rows and n columns. The entry of C on row i and column j is the sum of the products of the corresponding elements on row i of matrix A and column j of matrix B, as shown below:

C[i,j] = A[i,1]×B[1,j] + A[i,2]×B[2,j] + ... + A[i,k]×B[k,j]

How can we use multiple execution threads to solve this problem? We notice that the computation of C[i,j] is independent of the computation of any other entry of matrix C. Because of this, after matrices A and B are read in, the main program can create m×n execution threads, one for each entry of matrix C. Each of these execution threads computes the products of the corresponding elements, sums them up, and stores the result into matrix C.

It requires k multiplications to compute a single entry of matrix C. Since there are m×n entries in C, the program with only one thread uses m×n×k multiplications. On the other hand, in the above scheme, each thread uses k multiplications and there are m×n threads. If we have only one CPU, the multiple execution threads version may not be as efficient as the single execution thread one; however, if there is more than one CPU, each of these CPUs may be assigned a number of execution threads and the execution efficiency is higher. In the extreme case in which we have m×n CPUs to use, all execution threads run at the same time, so the whole matrix multiplication takes only the time needed to compute one entry. Thus, ignoring the cost of creating and scheduling threads, it is up to m×n times faster than the single execution thread version.

By now, you perhaps have a good feel for why splitting a program into multiple execution threads may increase the execution efficiency. However, just like in the movie Multiplicity, creating too many execution threads may lead to a chaotic situation, because in addition to splitting a program into multiple execution threads, these threads must communicate with each other properly in order to work together. Thus, in addition to learning how to create execution threads, we also have to learn how to manage threads and how to synchronize them.

The above examples may look a little unrealistic, and their benefits may seem limited to program efficiency. There are other benefits of using multiple execution threads.

There are four basic thread management operations: thread creation, thread termination, thread join, and thread yield.

Thread Creation

We discussed the creation of threads above. Basically, we can split an execution thread into two. After this, both threads execute concurrently. The creating thread is the parent thread, and the created thread is a child thread. Note that any thread, including the main program, which runs as a thread when it starts, can create child threads at any time. In the following diagram, Thread A runs initially. Sometime later, it creates Thread B, as indicated by a yellow dot. After this creation, Thread A and Thread B run concurrently. Later on, Thread A may create one more thread, Thread C. After Thread C is created, there are three threads running concurrently, all of which compete for the CPUs. However, none of them knows which thread is running at a particular time. The quicksort example discussed above employs this scheme: Thread A receives an array segment, partitions it into two segments, creates Thread B to sort the left segment, and then creates Thread C to sort the right one. Alternatively, after the given array segment is partitioned into two, Thread A creates Thread B to sort the left segment and sorts the right segment by itself. In this way, two threads, one parent (Thread A) and one child (Thread B), would be sufficient.

In the matrix multiplication example, the main thread (i.e., the main program) must create a number of threads, one for each entry of the resulting matrix. A possibility is shown below. Two for statements are used to create m×n threads. We shall see more examples that dynamically create threads later.
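As one hedged possibility in Java, the two nested for statements below create one thread per entry of the result matrix. The class name ThreadPerEntryMatrixSketch and the use of int matrices are assumptions made for this illustration, and Java indices start at 0 rather than 1.

```java
final class ThreadPerEntryMatrixSketch {
    // Computes C = A x B with one thread per entry of C.
    // A has m rows and k columns; B has k rows and n columns.
    static int[][] multiply(int[][] a, int[][] b) {
        final int m = a.length;
        final int k = b.length;
        final int n = b[0].length;
        int[][] c = new int[m][n];
        Thread[][] workers = new Thread[m][n];

        // Two for statements create the m x n threads.
        for (int i = 0; i < m; i++) {
            for (int j = 0; j < n; j++) {
                final int row = i;
                final int col = j;
                workers[i][j] = new Thread(() -> {
                    int sum = 0;
                    for (int l = 0; l < k; l++) {
                        sum += a[row][l] * b[l][col];
                    }
                    c[row][col] = sum; // each thread writes only its own entry
                });
                workers[i][j].start();
            }
        }

        // The main thread waits for every entry before using the result.
        for (Thread[] rowOfWorkers : workers) {
            for (Thread worker : rowOfWorkers) {
                try {
                    worker.join();
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                    throw new IllegalStateException("interrupted while multiplying", e);
                }
            }
        }
        return c;
    }

    public static void main(String[] args) {
        int[][] c = multiply(new int[][] {{1, 2}, {3, 4}}, new int[][] {{5, 6}, {7, 8}});
        System.out.println(java.util.Arrays.deepToString(c));
    }
}
```

For large matrices, m×n threads would far exceed the number of CPUs; in practice one would probably assign a block of entries or a whole row to each thread, which does not change the idea shown here.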

Thread Termination

In most cases, threads are not created to run forever. After finishing their work, threads terminate. In the quicksort example, after both array subsegments are sorted, the threads created for sorting them terminate. In fact, the thread that creates these two child threads terminates too, because its assigned task is complete. In the merging example, the threads created to determine the positions of array elements a[i] and b[j] in the merged array terminate once the final positions are computed. Similarly, in the matrix multiplication example, once the value of C[i,j] is computed, the corresponding thread terminates. In general, when the assigned task of a thread completes, the thread may be terminated.

Moreover, in the thread model described here, if the parent thread terminates, all of its child threads terminate as well. Why is this important? We briefly mentioned above that the child threads share resources with the parent thread, including variables. When the parent thread terminates, all of its variables are gone, and, as a result, the child threads will not be able to access the resources that the parent thread owns. Thus, if the parent thread runs faster and terminates earlier than its child threads do, we have a problem! This is why we need the third thread management feature: thread join.

Thread Join

Imagine the following scenario. You are preparing for tomorrow’s final exam and feel a little hungry. So, you give your younger brother ten bucks and ask him to buy a pizza for you. In this case, you are the main thread and your brother is a child thread. Once your order is given, you and your brother do your jobs concurrently (i.e., studying and buying a pizza). Now, we have two cases to consider. First, your brother brings your pizza back and terminates while you are still studying. In this case, you can stop studying and enjoy the pizza. Second, you finish studying early (i.e., your assigned job for today, studying for tomorrow’s final exam, is done) and want to go to sleep before the pizza is available. Of course, you cannot sleep; otherwise, you won’t have a chance to eat the pizza. What you have to do is wait until your brother brings the pizza back. This is exactly the problem and solution we mentioned at the end of the previous section.

Thread join is designed to solve this problem. A thread can execute a thread join to wait until the other thread terminates. In our case, you – the main thread – should execute a thread join waiting for your brother – a child thread – to terminate. In general, thread join is for a parent to join with one of its child threads. Thread join has the following activities, assuming that a parent thread P wants to join with one of its child threads C.

- When P executes a thread join in order to join with C, which is still running, P is suspended until C terminates. Once C terminates, P resumes.

- When P executes a thread join and C has already terminated, P continues as if no such thread join had ever been executed (i.e., join has no effect).

A parent thread may join with many of the child threads it created. Or, a parent may join with only some of its child threads and ignore the others. In this case, the child threads that are ignored by the parent will be terminated when the parent terminates.
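The pizza scenario can be sketched in Java with Thread.join. The class name JoinSketch, the method studyThenEat, and the 50 ms delay are made up for this illustration. The second join call shows the case where the child has already terminated: it returns immediately.

```java
final class JoinSketch {
    // The parent "studies" while a child thread "buys the pizza",
    // then joins with the child before using the result.
    static String studyThenEat() {
        String[] table = new String[1]; // shared with the child thread
        Thread brother = new Thread(() -> {
            try {
                Thread.sleep(50); // placeholder for the trip to the pizza shop
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
            table[0] = "pizza";
        });
        brother.start();

        // ... the parent would do its own work (studying) here ...

        try {
            brother.join(); // parent is suspended until the child terminates
            brother.join(); // child already terminated: returns immediately
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        }
        return table[0]; // safe to read: the child has finished writing
    }

    public static void main(String[] args) {
        System.out.println("Dinner: " + studyThenEat());
    }
}
```

Without the join, the parent could read the shared slot before the child has written it and find it empty.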

Thread Yield

Suppose you run a number of programs at the same time on a computer. It is possible that some CPU hogs keep eating up CPU cycles so that other programs can hardly run. Well, this may be a problem with the scheduling policy of the operating system. However, when we write our programs with multiple threads, we have to make sure that no thread occupies the CPU forever, or for a very long time, without relinquishing it. Otherwise, we will end up in a situation where one or two threads keep running while the others simply wait for their turns. That is, we should run our threads in a “polite” way, so that once in a while a thread takes a rest and lets other threads use the CPU. This is achieved by thread yield.

When a thread executes a thread yield, the executing thread is suspended and the CPU is given to some other runnable thread. The yielding thread then waits until the CPU becomes available again. Technically, in process scheduler terminology, the executing thread is put back into the ready queue of the processor and waits for its next turn. The following shows an example, where a small circle indicates the execution of a thread yield, a small square means control is transferred back, a solid arrow indicates thread execution, and a dashed line segment depicts a thread waiting for execution. Suppose we have three threads A, B and C. Initially, A is running and executes a thread yield sometime later. This causes A to be suspended temporarily, and the CPU is given to the next thread, say B. Then, B runs for a while and executes a thread yield. Because there are two threads that are ready to run, A and C, the thread system picks one to run. Suppose it is C. When C executes a thread yield, control may switch back to A or B; however, the diagram shows control being given back to A. In this way, threads execute in a cooperative way.
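A small Java sketch of cooperative yielding is shown below. The class name YieldSketch and the logging scheme are assumptions for this illustration. Note that Java's Thread.yield() is only a hint to the scheduler, which is free to ignore it, so the order of the logged steps can differ from run to run and from the diagram described above.

```java
import java.util.List;
import java.util.concurrent.CopyOnWriteArrayList;

final class YieldSketch {
    // Runs threads A, B and C; each performs some steps and yields after every step.
    // Returns the log of steps in the order they happened to be recorded.
    static List<String> run(int stepsPerThread) {
        List<String> log = new CopyOnWriteArrayList<>(); // thread-safe list
        String[] names = {"A", "B", "C"};
        Thread[] threads = new Thread[names.length];
        for (int t = 0; t < names.length; t++) {
            final String name = names[t];
            threads[t] = new Thread(() -> {
                for (int step = 0; step < stepsPerThread; step++) {
                    log.add(name + step); // one unit of work
                    Thread.yield();       // politely offer the CPU to other runnable threads
                }
            });
        }
        for (Thread thread : threads) {
            thread.start();
        }
        for (Thread thread : threads) {
            try {
                thread.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException("interrupted while waiting", e);
            }
        }
        return log;
    }

    public static void main(String[] args) {
        System.out.println(run(3)); // the interleaving is not guaranteed
    }
}
```

A yielding thread stays runnable the whole time, so every step is eventually executed regardless of how the scheduler treats the hint.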

Thread Suspend and Resume

Thread suspend and resume are two more thread management features. When a thread executes a thread suspend to suspend the execution of itself or another thread, the indicated thread stays suspended until a thread resume releases it. For example, suppose we have three threads A, B and C running concurrently. Then, thread A executes a thread suspend to suspend the execution of thread B. After this, we have only two threads, A and C, running concurrently. Note that even if both A and C are waiting for the completion of their own I/O activities and no thread is running, the suspended thread B cannot run. To run thread B again, one of the other threads must execute a corresponding thread resume. For example, thread C may execute a thread resume to resume thread B’s execution. After this, all three threads are running concurrently.

Both thread yield and thread suspend cause the execution of a thread to be suspended. What is the difference? The difference is a big one! With thread yield, the yielding thread is put back to the ready queue and will run when its turn comes. Thus, a yielding thread is runnable if the CPU becomes free in the future, although it is suspended. With a thread suspend, the suspended thread is not in the ready queue, and, as a result, the scheduler will not be able to pick it up and let it run when the CPU becomes free. Instead, the execution of a suspended thread can be resumed only by a specific thread resume call.

Thread suspend/resume can be very useful. For example, suppose a program must handle five different tasks. The main program may create five threads, one for each task. Initially, all threads are suspended by the main program. Once a task comes, the main program just resumes the corresponding thread. After handling the task, the thread simply suspends itself. This may be more efficient than creating a new thread to handle the task and then terminating the thread. However, thread suspend and resume can pose a problem. Suppose a thread acquires a lock so that it becomes the only thread that can access a shared resource, and, before it releases the lock, it is suspended by another thread. Should this happen, no other thread can access the shared resource until some thread resumes the suspended thread so that it can release the lock. Because of this potential problem, which may lead to a system deadlock, the use of thread suspend and resume is usually not recommended. Some systems, such as Pthreads, do not support thread suspend and resume.
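Java's own Thread.suspend and Thread.resume are deprecated because of the lock-holding problem just described, so the sketch below swaps in a different technique: a worker that parks itself on a java.util.concurrent.Semaphore and is woken by the main thread when a task arrives. This is not true suspend/resume, since the worker can only stop at a point it chooses, which is exactly what avoids being stopped while holding a lock. The class name ParkedWorkerSketch and the method handleTasks are hypothetical.

```java
import java.util.concurrent.Semaphore;
import java.util.concurrent.atomic.AtomicBoolean;
import java.util.concurrent.atomic.AtomicInteger;

final class ParkedWorkerSketch {
    // Creates one worker that sleeps between tasks, hands it taskCount tasks
    // one at a time, and returns how many tasks the worker handled.
    static int handleTasks(int taskCount) {
        Semaphore wakeUp = new Semaphore(0);   // no permits: the worker starts out waiting
        Semaphore taskDone = new Semaphore(0);
        AtomicInteger handled = new AtomicInteger();
        AtomicBoolean shutdown = new AtomicBoolean(false);

        Thread worker = new Thread(() -> {
            while (true) {
                wakeUp.acquireUninterruptibly(); // "suspend" until the main thread wakes us
                if (shutdown.get()) {
                    return;
                }
                handled.incrementAndGet();       // placeholder for handling the task
                taskDone.release();              // report completion, then wait again
            }
        });
        worker.start();

        for (int i = 0; i < taskCount; i++) {
            wakeUp.release();                    // "resume" the worker for one task
            taskDone.acquireUninterruptibly();   // wait until that task is finished
        }

        shutdown.set(true);
        wakeUp.release();                        // wake the worker one last time so it can exit
        try {
            worker.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        }
        return handled.get();
    }

    public static void main(String[] args) {
        System.out.println("Tasks handled: " + handleTasks(5));
    }
}
```

As in the five-task example above, the thread is created once and reused, which avoids the cost of creating and terminating a thread for every task.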

What Is the Difference Between Daemon and Non-Daemon Threads?

In Java we have two types of threads: daemon threads and user threads.

Generally, all threads created by the programmer are user threads (unless you specify a thread to be a daemon, or the parent thread is a daemon thread). User threads are generally meant to run our program code. The JVM does not terminate until all user threads have terminated.

On the other hand, we have daemon threads. These threads are generally “service provider” threads. They should be used to execute system code rather than your program code. These threads run in parallel with your code, but the JVM can kill them at any time. When the JVM finds no remaining user threads, it stops, and all daemon threads terminate instantly. Thus, you should never depend on a daemon thread to perform any program code.
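The following Java sketch shows how a thread is marked as a daemon and how the daemon status is inherited from the creating thread. Thread.setDaemon and Thread.isDaemon are standard methods; the class name DaemonSketch and the method daemonFlags are hypothetical. setDaemon must be called before the thread is started.

```java
final class DaemonSketch {
    // Returns {user thread is daemon?, daemon thread is daemon?,
    //          thread created by the daemon thread is daemon?}.
    static boolean[] daemonFlags() {
        boolean[] flags = new boolean[3];

        Thread user = new Thread(() -> { });
        user.setDaemon(false);            // explicit, in case the caller is itself a daemon
        flags[0] = user.isDaemon();

        Thread daemonParent = new Thread(() -> {
            Thread child = new Thread(() -> { });
            flags[2] = child.isDaemon();  // inherited from the creating (daemon) thread
        });
        daemonParent.setDaemon(true);     // must happen before start()
        flags[1] = daemonParent.isDaemon();

        daemonParent.start();
        try {
            daemonParent.join();          // wait so that flags[2] has been written
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        }
        return flags;
    }

    public static void main(String[] args) {
        System.out.println(java.util.Arrays.toString(daemonFlags()));
    }
}
```

The join here is only needed to read the result. In a real program the JVM would not wait for a daemon thread at exit, which is why work that must complete belongs on a user thread.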

Events with Threading

Use events with threads; this is one of the techniques for synchronizing one thread with another. With an event object such as ManualResetEvent, one thread waits on the event while another thread signals it.
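ManualResetEvent is a .NET class. To keep the examples on this page in one language, the sketch below uses Java's java.util.concurrent.CountDownLatch as a rough stand-in: countDown plays the role of signaling the event and await plays the role of waiting on it. The analogy is only partial, because a CountDownLatch cannot be reset once it has been released. The class name EventSketch and the method waitForSignal are hypothetical.

```java
import java.util.concurrent.CountDownLatch;

final class EventSketch {
    // One thread prepares a message and signals; the caller waits for the signal.
    static String waitForSignal() {
        CountDownLatch ready = new CountDownLatch(1); // the "event", initially not signaled
        String[] message = new String[1];

        Thread producer = new Thread(() -> {
            message[0] = "data ready"; // do the work first
            ready.countDown();         // then signal the event
        });
        producer.start();

        try {
            ready.await();             // block until the event has been signaled
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        }
        return message[0];             // visible because it was written before the signal
    }

    public static void main(String[] args) {
        System.out.println(waitForSignal());
    }
}
```

Unlike join, an event lets the waiting thread continue as soon as the signal is given, even if the signaling thread keeps running afterwards.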

Difference between a computer process and thread

A single process can have multiple threads that share global data and address space with other threads running in the same process, and therefore can operate on the same data set easily. Processes do not share address space and a different mechanism must be used if they are to share data.

If we consider running a word processing program to be a process, then the auto-save and spell check features that occur in the background are different threads of that process which are all operating on the same data set (your document).

Process

In computing, a process is an instance of a computer program that is being sequentially executed[1] by a computer system that has the ability to run several computer programs concurrently.

Thread

A single process may contain several executable programs (threads) that work together as a coherent whole. One thread might, for example, handle error signals, another might send a message about the error to the user, while a third thread executes the actual task.

Interlocked class

Provides atomic operations for variables that are shared by multiple threads. The Interlocked class provides methods for the following operations, each of which is performed atomically:

1. Increment values.

2. Decrement values.

3. Exchange the value of a variable.

4. Compare a value and conditionally replace it (compare and exchange).

Example (C#): System.Threading.Interlocked.Increment(ref intA)
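Interlocked is a .NET class. As a hedged Java analogue of the four operations listed above, the sketch below uses java.util.concurrent.atomic.AtomicInteger, whose incrementAndGet, decrementAndGet, getAndSet and compareAndSet methods roughly correspond to Increment, Decrement, Exchange and CompareExchange. The class name AtomicCounterSketch and its methods are hypothetical.

```java
import java.util.concurrent.atomic.AtomicInteger;

final class AtomicCounterSketch {
    // Several threads increment one shared counter; no increments are lost.
    static int countWith(int threadCount, int incrementsPerThread) {
        AtomicInteger counter = new AtomicInteger(0);
        Thread[] threads = new Thread[threadCount];
        for (int t = 0; t < threadCount; t++) {
            threads[t] = new Thread(() -> {
                for (int i = 0; i < incrementsPerThread; i++) {
                    counter.incrementAndGet(); // atomic read-modify-write
                }
            });
            threads[t].start();
        }
        for (Thread thread : threads) {
            try {
                thread.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException("interrupted while waiting", e);
            }
        }
        return counter.get();
    }

    // Walks through the four kinds of operation on a single value.
    static int[] basicOperations() {
        AtomicInteger value = new AtomicInteger(5);
        int afterIncrement = value.incrementAndGet(); // 5 -> 6
        int afterDecrement = value.decrementAndGet(); // 6 -> 5
        int previous = value.getAndSet(7);            // returns 5, value is now 7
        value.compareAndSet(7, 9);                    // value is 7, so it becomes 9
        value.compareAndSet(7, 1);                    // value is 9, not 7: unchanged
        return new int[] {afterIncrement, afterDecrement, previous, value.get()};
    }

    public static void main(String[] args) {
        System.out.println("Counter: " + countWith(4, 10000));
    }
}
```

With a plain int and counter++ instead of the atomic call, two threads could read the same old value and one increment would be lost; the atomic operation rules that out without an explicit lock.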
